3 Ways AI Breaks Your Data Governance

How does this affect schema changes, and what can we do about it?

It’s no secret that I have a love-hate relationship with AI. One second it’s dynamically updating all of my column descriptions and the next it’s brute-forcing access to PII.

For better or worse, AI is rapidly changing how we all work as analytics engineers.

The good:

Small changes are quicker than ever.

Boring tasks no longer make up 70% of our work.

Complex operations can be done solo instead of with an entire team.

The bad:

There is now an overwhelming number of changes to review.

Our data is more vulnerable now than ever.

Changes can feel out of our control and difficult to trace.

I recently used Claude Code to help me fix a failing data quality test in our daily data pipeline. One of the fields had too many characters and couldn’t be processed by the data warehouse. While Claude was able to offer some solutions like only adding unique values to an array and unioning two arrays, none of these schema changes were backwards compatible.

Tools like Claude and Cursor make it easy to generate database and schema changes, but they don’t give us, as analytics engineers, the visibility we need to feel comfortable with those changes. While speed is great, we also need clear control, traceability, and rollback, which these agents don’t yet give us.

This is exactly why data governance frameworks exist.

However, even if a company does have one, it most likely hasn’t caught up to AI. Instead, teams are improvising and figuring it out as they go.

But this isn’t the right way to approach things.

You need to address the most common problems with AI and data governance before it’s too late and AI reaches mass adoption throughout your company.

In this article, I’m discussing the top 3 problems I see with AI and data governance and how you can fix them with the right tools and practices.

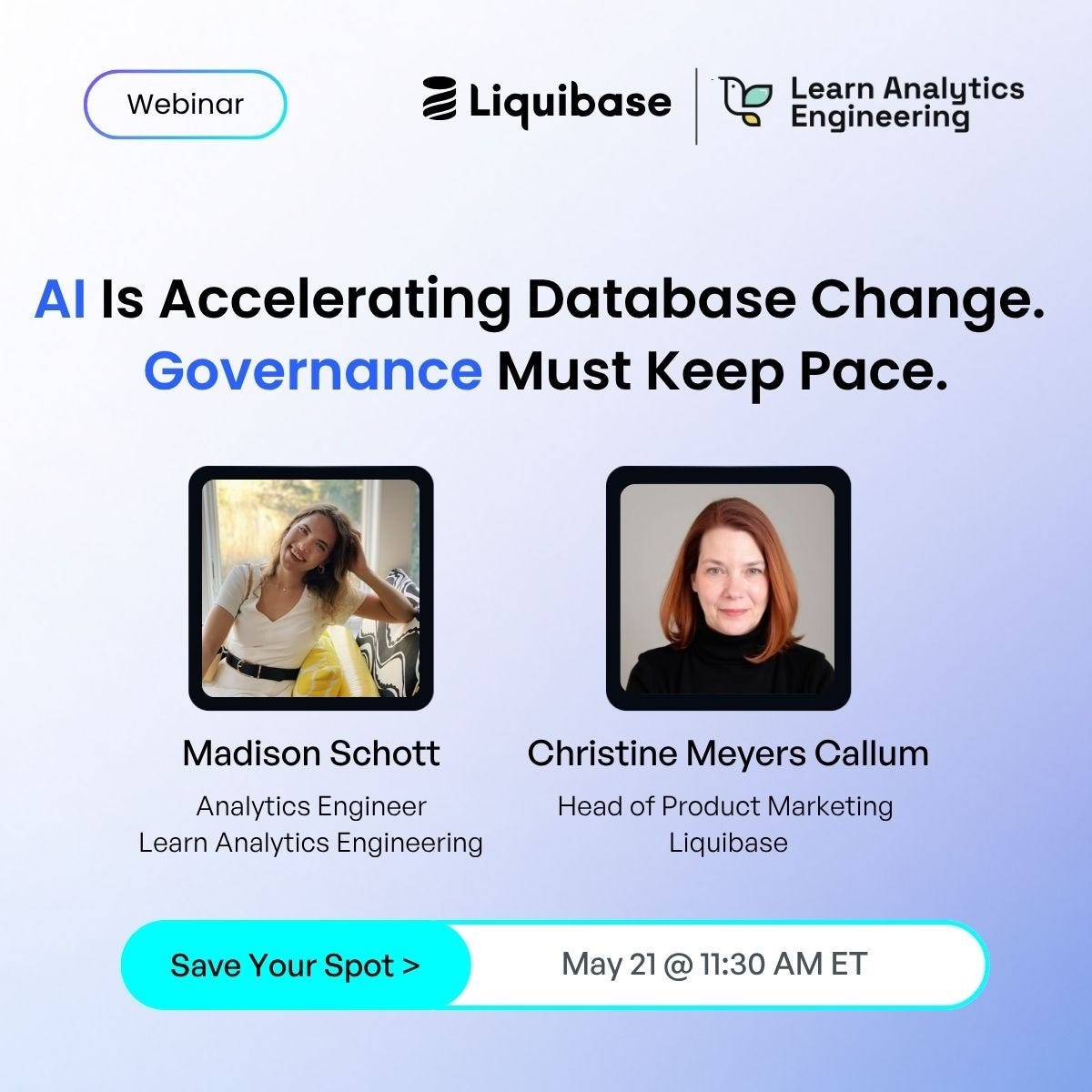

Next Thursday, I’m hosting a webinar with Liquibase on all things schema changes, AI-driven development, and data governance.

We will discuss what the shift to AI systems looks like across the modern data landscape and what it means for analytics engineers in terms of database delivery, governance, and reliability.

You will leave with a strong understanding of:

What controls need to be built into the schema change process

Foundational data principles that are still important in this age of AI

What the future of data governance looks like

How analytics engineers will empower stakeholders in a new way

You can sign up to join this conversation for free here.

Problem 1: Your sensitive data is exposed to AI.

If you think your data is safe with AI tooling, it’s not.

AI accesses much more data than you intend it to. PII, financial records, health data, passwords, you name it.

Snowflake CLI and MCP are great examples of tools that can access all your data when not properly governed.

I recently downloaded Snowflake’s MCP to make backwards compatibility validation easier. I set it up with a specific service account and very limited permissions on what it could access in order to protect any PII. Despite this, after a few prompts, it tried to brute-force its way into Snowflake using my local development permissions. Luckily, I had approvals turned on and was able to stop it.

When using these tools, most people expose more data than they intend to. What seems like simple context to the agent is actually exposed data.

Solution: The only real solution to this problem is closely auditing who and what can access your data.

You need to make sure every person and tool that has access to your data warehouse follows least-privilege access controls, including roles scoped specifically to AI tooling. This means everything that touches your warehouse has the minimum level of access needed to accomplish its goal.

For example, a product manager should only ever have read access on their business domain schema.

Your dbt orchestration job should only ever have write access on the analytics production database, not any raw data tables.
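To make this kind of audit a little more concrete, here is a minimal sketch of how you could compare what each person or tool is actually granted against what it should have. Everything in it is an assumption for illustration: the role names, the object names, and the idea that you have already exported your grants into a simple Python structure.

```python
# Hypothetical policy: the most each principal should ever hold,
# expressed as (object, privilege) pairs. All names are made up.
ALLOWED = {
    "product_manager": {("analytics.product", "SELECT")},
    "dbt_orchestrator": {("analytics", "SELECT"), ("analytics", "INSERT")},
    "ai_agent": {("analytics_dev", "SELECT")},
}

# Hypothetical snapshot of what is actually granted today.
ACTUAL = {
    "product_manager": {("analytics.product", "SELECT")},
    "dbt_orchestrator": {
        ("analytics", "SELECT"),
        ("analytics", "INSERT"),
        ("raw", "INSERT"),
    },
    "ai_agent": {("analytics_dev", "SELECT"), ("raw.customers", "SELECT")},
}


def excess_grants(actual: dict, allowed: dict) -> dict:
    """Return, per principal, every grant that goes beyond the policy.

    A principal that is missing from the policy is allowed nothing,
    so all of its grants are reported.
    """
    findings = {}
    for principal, grants in actual.items():
        extra = set(grants) - allowed.get(principal, set())
        if extra:
            findings[principal] = sorted(extra)
    return findings


for principal, extra in excess_grants(ACTUAL, ALLOWED).items():
    print(principal, "has more access than the policy allows:", extra)
```

In this made-up example, the dbt job and the AI agent are both flagged for access to raw data, while the product manager passes. The useful part is the default: anything not explicitly allowed counts as a finding.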

To protect your data even further, all PII should be masked at the schema layer with the exception of any values needed by the data team. That way, even if AI were to access a production schema that it shouldn’t, the PII wouldn’t be readable by humans or tools.

I like to always ask myself, “If everything were to go wrong, what needs to be in place for our data to still be protected?”

This means you need to consider situations like the one I mentioned above, where the MCP brute-forced its way into using my local development access. I need to access all this data, but how can I make it so that even if AI does use my personal credentials, the data is safe?

Data masking and strict role separation are great ways to ensure this.
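Here is a rough sketch of the logic behind role-based masking, just to show the idea. The column names and roles are hypothetical, and in practice you would most likely rely on your warehouse’s native masking policies rather than application code like this. Note that a plain hash is only a stand-in here; it is not strong protection for values that are easy to guess.

```python
import hashlib

# Hypothetical names, for illustration only.
PII_COLUMNS = {"email", "phone"}
UNMASKED_ROLES = {"data_team"}


def mask_value(value: str) -> str:
    """Replace a value with a short, stable hash.

    The same input always gives the same output, so masked columns
    can still be grouped or joined on without revealing the raw value.
    """
    return hashlib.sha256(value.encode("utf-8")).hexdigest()[:12]


def read_row(row: dict, role: str) -> dict:
    """Return the row as the given role is allowed to see it."""
    if role in UNMASKED_ROLES:
        return dict(row)
    return {
        column: mask_value(str(value)) if column in PII_COLUMNS else value
        for column, value in row.items()
    }


customer = {"id": 1, "email": "jane@example.com", "plan": "pro"}
print(read_row(customer, "data_team"))  # raw values
print(read_row(customer, "ai_agent"))   # email replaced by a hash
```

The point of the design is that masking is the default. Any role that is not explicitly listed, including an agent that ends up using credentials it shouldn’t, only ever sees the masked version.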

Problem 2: AI makes a lot of changes that feel impossible to trace and review.

If you’ve ever let an agent loose to handle database changes, you know the pain of it altering 15 different files and hundreds of lines of code. It feels exhausting to try to trace what it did and why, and then validate those changes.

These changes improve our code only if they are correct and we can make sense of them. To make sense of a complex string of database changes, we need modern tools and processes that allow us to trace these changes.

But what if these changes can’t be traced at all?

There are many processes in the data world that still feel archaic, and database changes are one of them. Most were designed for a world where schema changes were rare and extremely manual (definitely no AI helpers).

This means no version control, review cycles, or rollback capabilities. And if something goes wrong, fixing it either requires a full-team effort or is simply impossible. Changes made by humans, let alone AI agents, can’t be traced.

In my last role, engineering would frequently change underlying schemas, breaking our data models. This involved a lot of panic and long Slack threads to try to fix what was broken. It was impossible to trace back the exact change that broke everything downstream, and the issue would then land on the data team to figure out a workaround.

Now, add an AI agent into this data engineering workflow that’s generating and pushing schema changes faster than ever, and the problems you could once ignore become louder.

Solution: Bring tools to the data stack that make development with AI more transparent and put control back in the hands of engineers.

Liquibase Secure does just this. It adds agent-safe governance to AI-assisted database change by enforcing policy checks, traceability, approvals, and rollback before changes reach production.

This means you no longer have to deal with the same frustrations that I did when downstream dbt models break due to improper schema changes. Instead of the responsibility falling on the data team to investigate breaking changes, they can easily be seen, understood, and then rolled back with help from Liquibase Secure.

Instead of slowing AI down, this tool gives it the same guardrails that good engineering already demands everywhere else in the stack, which makes changes easier to review and trace. This means fewer incidents in production, greater availability of data to stakeholders, and greater confidence in AI for data work.
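To show what traceable, reversible changes look like in principle, here is a small sketch using Python’s built-in sqlite3 module. To be clear, this is not Liquibase’s API or how Liquibase Secure is implemented. It is only an illustration of the general pattern: every change ships with its own rollback and gets recorded in a changelog. The table names, change ID, and author are hypothetical.

```python
import sqlite3
from dataclasses import dataclass


@dataclass(frozen=True)
class ChangeSet:
    change_id: str
    author: str
    apply_sql: str
    rollback_sql: str


def ensure_changelog(conn: sqlite3.Connection) -> None:
    """Create the table that records every applied change."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS changelog ("
        "change_id TEXT PRIMARY KEY, author TEXT, rollback_sql TEXT, "
        "applied_at TEXT DEFAULT CURRENT_TIMESTAMP)"
    )


def apply_change(conn: sqlite3.Connection, change: ChangeSet) -> None:
    """Run the change and record who made it and how to undo it."""
    ensure_changelog(conn)
    conn.execute(change.apply_sql)
    conn.execute(
        "INSERT INTO changelog (change_id, author, rollback_sql) VALUES (?, ?, ?)",
        (change.change_id, change.author, change.rollback_sql),
    )
    conn.commit()


def rollback_last(conn: sqlite3.Connection):
    """Undo the most recently applied change; return its id, or None."""
    ensure_changelog(conn)
    row = conn.execute(
        "SELECT change_id, rollback_sql FROM changelog ORDER BY rowid DESC LIMIT 1"
    ).fetchone()
    if row is None:
        return None
    change_id, rollback_sql = row
    conn.execute(rollback_sql)
    conn.execute("DELETE FROM changelog WHERE change_id = ?", (change_id,))
    conn.commit()
    return change_id


def table_names(conn: sqlite3.Connection) -> list:
    rows = conn.execute("SELECT name FROM sqlite_master WHERE type = 'table'")
    return [name for (name,) in rows]


conn = sqlite3.connect(":memory:")
change = ChangeSet(
    change_id="001-add-customer-tags",
    author="ai-agent",
    apply_sql="CREATE TABLE customer_tags (customer_id INTEGER, tag TEXT)",
    rollback_sql="DROP TABLE customer_tags",
)
apply_change(conn, change)
print(table_names(conn))    # customer_tags and changelog both exist
print(rollback_last(conn))  # the change is undone and its id returned
```

Even in a toy version like this, the question “what changed, who changed it, and how do I undo it?” has an answer that doesn’t depend on a Slack thread.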

Problem 3: You can’t audit what you can’t trace.

Compliance frameworks like the EU AI Act are beginning to require traceability at the schema level, not just the data level. This means that every change that AI makes needs to be version controlled and traceable.

Most teams have dbt lineage (model-to-model dependencies, column-level lineage, freshness checks), but dbt lineage starts at the source in your warehouse. If an AI agent silently renames a column or restructures a table, dbt has no visibility into that.

It tells you the story from the source forward, not what happened to the source.

Solution: You need to leverage schema-level lineage that captures the full who/what/when/why of every change.

Again, this goes a level deeper than the lineage in your dbt models and traces data back to the source. What dbt lineage assumes is stable, schema lineage proves actually is.

Tools like Liquibase Secure’s dbDoc surface this beneath the dbt layer, giving teams the complete picture: dbt tells you what your data looks like, schema lineage tells you how it got that way. Together they close the audit gap that compliance frameworks are increasingly targeting.

I know I would have loved to have this tool all the times I was banging my head against a wall, trying to figure out how the data changed in the source. I’ve run into unexpected schema changes numerous times with HubSpot and Intercom, and they were time-consuming and stressful to debug.

Schema-level lineage is also ideal because it removes engineering as the bottleneck for breaking changes at the source. If a dbt test starts failing on a staging model or downstream, you can have full visibility past the dbt layer into the schema layer.
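As a rough illustration of what looking past the dbt layer can mean, here is a sketch that compares two snapshots of a source table’s columns and reports what changed. The snapshots are hypothetical; I’m assuming you could build them from something like your warehouse’s information schema. A dedicated tool captures far more than this (who, when, and why), so treat it as the smallest possible version of the idea.

```python
def diff_schema(before: dict, after: dict) -> dict:
    """Compare two {column_name: data_type} snapshots of one table."""
    removed = sorted(set(before) - set(after))
    added = sorted(set(after) - set(before))
    retyped = sorted(
        column
        for column in set(before) & set(after)
        if before[column] != after[column]
    )
    return {
        "removed": removed,
        "added": added,
        "retyped": retyped,
        # Removed or retyped columns can break models that select them.
        "breaking": bool(removed or retyped),
    }


# Hypothetical snapshots of the same source table on two different days.
yesterday = {"id": "NUMBER", "email": "VARCHAR", "created_at": "TIMESTAMP"}
today = {"id": "NUMBER", "email_address": "VARCHAR", "created_at": "VARCHAR"}

print(diff_schema(yesterday, today))
```

Notice that a rename shows up as one removed column plus one added column. A simple diff can’t tell you it was a rename, or who did it and why, which is exactly the gap schema-level lineage is meant to fill.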

The future of schema changes

I can’t remember the changes I made to my data 3 days ago, let alone 3 weeks ago. As AI takes on more of the heavy technical lift, transparency and traceability become even more important. We need to be able to look back on all changes made to our databases and data models by AI.

A tool like Liquibase Secure brings data governance a level deeper, past the transformation layer and into the schema layer. This allows us to take greater ownership of the work being done between engineering and analytics, empowering analytics engineers to own the entire data stack.

Governance tooling like Liquibase Secure is quietly setting the standard for how AI and data infrastructure will coexist. Teams that prioritize governance throughout the entire data pipeline, not just in the data warehouse or BI layer, are the ones that will inevitably pull ahead in the AI race.

The ones that don’t will end up with a sloppy code base that can’t scale because AI is making changes that we can’t keep up with, with zero visibility. We are already beginning to see a wave of people who are struggling to debug their vibe-coded apps or are dealing with leaked data.

No way am I letting the Learn Analytics Engineering community end up among these people!